The first part of our article series on “Storage on Demand” highlighted the conceptual advantages of flexible storage solutions. In the second part, we turn to the practical level: the physical fundamentals on which modern storage architectures are based.

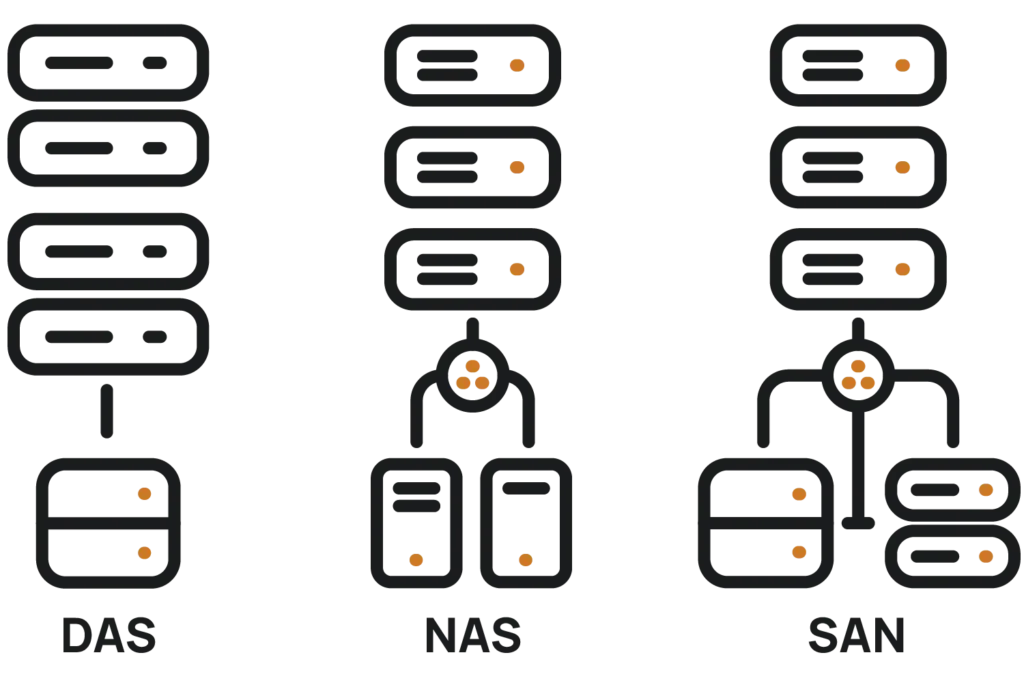

Storage architectures come in three main forms: DAS (Direct Attached Storage), NAS (Network Attached Storage), and SAN (Storage Area Network). They define how storage is physically connected and made available, either locally or over a network, and they differ in performance, scalability, reliability, and management.

At first glance, modern storage solutions appear abstract: resources can be scaled as needed, storage capacities assigned flexibly, and data moved dynamically. However, behind every on-demand model lies a physical infrastructure that largely determines how performant, reliable, and expandable a storage solution is. That infrastructure is built on the three architectures named above: DAS, NAS, and SAN.

These architectures differ in how storage is connected, how many systems can access it simultaneously, and what role network, protocols, and management software play. The choice of the appropriate model depends heavily on the respective use case, both technically and economically.

Direct Attached Storage (DAS) refers to storage that is physically connected directly to a server, for example via SATA, SAS, or PCI Express. It is intended exclusively for local use by that server and not for access by other systems in the network. This architecture is simple, cost-effective, and enables very low latencies since no network communication is required.

A typical use case for DAS is a dedicated database server that handles only local I/O loads. DAS is also a good fit for certain appliances or monitoring systems that do not need to store their data centrally.

However, the limitations are obvious: neither high availability nor shared use by multiple systems is readily possible. If the associated server fails, the storage is no longer accessible either. Scalability is also limited: capacity is bounded by the drive slots available in the server.

Network Attached Storage (NAS) extends the concept by making storage available to multiple clients over the network. Access is file-based via protocols such as NFS (for Unix/Linux systems) or SMB (for Windows environments). From the clients’ perspective, the storage appears as an additional network drive and can be centrally managed.

Typical application scenarios are file-based workloads, for example a creative agency where several employees access graphics, presentations, or video files simultaneously. NAS is also widely used as a central backup target or for exchanging large amounts of data in office or development environments. It is a cost-effective and popular solution, especially for small and medium-sized enterprises, because it has a low financial barrier to entry and can be expanded with many additional functions.

The advantages lie in simple integration, central management, and shared use. At the same time, NAS reaches its technical limits with highly parallel access, high I/O loads, and the performance requirements of virtualized environments. Furthermore, fault tolerance depends heavily on the specific implementation: a single NAS system can be a Single Point of Failure (SPoF) if no redundancy mechanisms are in place.

A Storage Area Network (SAN) goes significantly beyond the capabilities of DAS and NAS. Unlike the file-based access of NAS, access in a SAN is block-based: servers address individual data blocks, so the storage appears to them much like a local hard drive. This enables higher performance and finer control of storage access.
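To illustrate what block-level addressing means, here is a minimal Python sketch. An ordinary temporary file stands in for a block device; the 4 KiB block size and the helper names (`make_fake_device`, `write_block`, `read_block`) are illustrative assumptions and do not correspond to any SAN product or protocol.

```python
import os
import tempfile

BLOCK_SIZE = 4096  # assumed block size in bytes; real devices vary


def make_fake_device(num_blocks: int, block_size: int = BLOCK_SIZE) -> str:
    """Create a zero-filled temporary file that stands in for a block device."""
    fd, path = tempfile.mkstemp(suffix=".img")
    with os.fdopen(fd, "wb") as dev:
        dev.write(b"\x00" * (num_blocks * block_size))
    return path


def write_block(path: str, block_number: int, data: bytes,
                block_size: int = BLOCK_SIZE) -> None:
    """Block-style write: the target is a block number, not a file name."""
    if len(data) != block_size:
        raise ValueError("data must be exactly one block long")
    with open(path, "r+b") as dev:
        dev.seek(block_number * block_size)  # byte offset of the block
        dev.write(data)


def read_block(path: str, block_number: int,
               block_size: int = BLOCK_SIZE) -> bytes:
    """Block-style read: fetch exactly one block by its number."""
    with open(path, "rb") as dev:
        dev.seek(block_number * block_size)
        return dev.read(block_size)
```

With file-based access, by contrast, the client names a file on a share and the storage system's own file system decides which blocks hold the data. With block access, that decision is left to the file system of the accessing server.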

A SAN consists of a dedicated storage network that typically uses Fibre Channel, InfiniBand, or Ethernet-based protocols such as iSCSI. It is usually kept separate from the production network and is designed for very high data rates and maximum fault tolerance. In larger data center environments, a SAN is often the central storage platform for virtualized server landscapes, databases, or business-critical applications.

In a virtualized environment, for example, dozens of virtual machines access shared data storage simultaneously. A SAN ensures that these accesses occur in parallel, with high performance and reliability, even under heavy load. Availability is secured through redundant controllers, multipathing, and automatic failover.
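The idea behind multipathing and failover can be sketched in a few lines of Python. This is a simplified, hypothetical model (the class names `StoragePath` and `MultipathVolume` and the path names are made up for illustration); real multipath drivers also handle load balancing, health probing, and path recovery.

```python
from dataclasses import dataclass


@dataclass
class StoragePath:
    """One route from a server to the storage system (hypothetical model)."""
    name: str
    healthy: bool = True


class MultipathVolume:
    """Sends I/O over the first healthy path and fails over to the next one."""

    def __init__(self, paths):
        if not paths:
            raise ValueError("at least one path is required")
        self.paths = list(paths)

    def active_path(self) -> StoragePath:
        """Return the first healthy path, in configured priority order."""
        for path in self.paths:
            if path.healthy:
                return path
        raise ConnectionError("all paths to the volume are down")

    def submit(self, request: str) -> str:
        """Describe which path would carry the given I/O request."""
        return f"{request} via {self.active_path().name}"


if __name__ == "__main__":
    path_a = StoragePath("hba0-controllerA")
    path_b = StoragePath("hba1-controllerB")
    volume = MultipathVolume([path_a, path_b])
    print(volume.submit("read block 42"))
    path_a.healthy = False  # simulate a failed controller or cable
    print(volume.submit("read block 42"))
```

As long as one path remains healthy, the request still reaches the storage; only when every path is down does the volume become unavailable.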

CERN, the European Organization for Nuclear Research, operates a Ceph-based storage cluster with approximately 30 PB of usable capacity and a sustained read throughput of about 30 GB/s to efficiently process the enormous amounts of data from the LHC experiments.
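To put these figures in proportion, the short calculation below estimates how long it would take to read the full capacity once at the stated throughput. It assumes decimal units (1 PB = 10^15 bytes, 1 GB = 10^9 bytes), which the figures above do not specify, and the function name is purely illustrative.

```python
PB = 10**15  # bytes per petabyte (decimal units, an assumption)
GB = 10**9   # bytes per gigabyte (decimal units, an assumption)


def full_read_seconds(capacity_pb: float, throughput_gb_per_s: float) -> float:
    """Time in seconds to read the whole capacity once at a constant rate."""
    if throughput_gb_per_s <= 0:
        raise ValueError("throughput must be positive")
    return (capacity_pb * PB) / (throughput_gb_per_s * GB)


seconds = full_read_seconds(30, 30)
days = seconds / 86_400  # seconds per day
print(f"{seconds:,.0f} s, about {days:.1f} days")
```

Under these assumptions, a single full pass over 30 PB at 30 GB/s takes one million seconds, or roughly 11.6 days.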

Another advantage is the flexibility in allocating and managing storage resources: virtual volumes can be dynamically provisioned, expanded, or migrated without physical intervention. At the same time, operating a SAN requires appropriate infrastructure, expertise, and investments in hardware, network components, and management tools.

DAS, NAS, and SAN form the physical foundation of every storage solution, including in the context of “Storage on Demand.” DAS is suitable for local, single-server scenarios (for example, high-performance databases), while NAS offers network-based access to shared files. SAN, in turn, provides a powerful and fault-tolerant solution for demanding workloads (such as the LHC experiments at CERN).

Which architecture is deployed depends on both the specific use case and the requirements for performance, scalability, availability, and management. Modern storage platforms often combine multiple technologies and abstract them through central software management. This allows even complex storage landscapes to be operated flexibly and on demand.
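The distinctions summarized here can be condensed into a deliberately coarse rule of thumb. The function below is a hypothetical sketch based only on the characteristics described in this article; its name, parameters, and decision order are assumptions, and a real decision would also weigh cost, existing infrastructure, and operational expertise.

```python
def suggest_architecture(shared_access: bool,
                         block_level: bool,
                         high_availability: bool) -> str:
    """Return 'DAS', 'NAS', or 'SAN' as a rough first orientation."""
    # Single server, no shared use, no failover requirement: local storage.
    if not shared_access and not high_availability:
        return "DAS"
    # Block-level access or strict availability needs point to a SAN.
    if block_level or high_availability:
        return "SAN"
    # Shared, file-based access without those requirements: NAS.
    return "NAS"


if __name__ == "__main__":
    # Dedicated database server with purely local I/O
    print(suggest_architecture(False, True, False))
    # Team sharing graphics and video files
    print(suggest_architecture(True, False, False))
    # Virtualized environment with many VMs on shared storage
    print(suggest_architecture(True, True, True))
```

The three calls mirror the example scenarios from the sections above: the database server, the creative agency, and the virtualized environment.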
